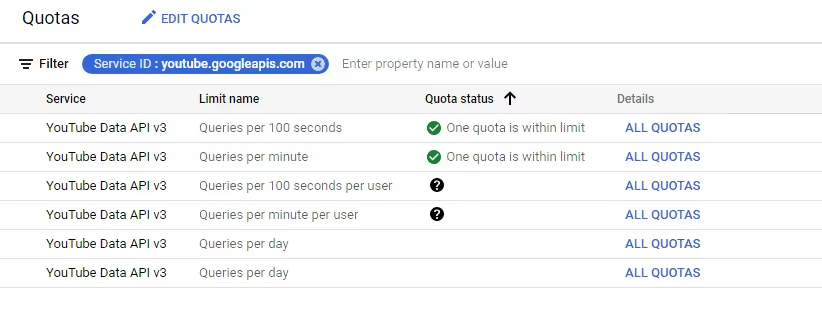

Understanding YouTube API limits in 2026 means knowing how the YouTube Data API v3 quota system works, how each request costs units, and when to request higher limits. By default, each Google Cloud project gets 10,000 units per day, resetting at midnight Pacific Time. If your app exceeds this limit, it stops making API calls until the quota resets or you increase it. This guide explains how to calculate your YouTube API usage, understand the cost of different operations, and fix “quota exceeded” errors so your integration runs reliably at scale.

Recommended Reading:

- How to Get YouTube API Key

- Unlocking Success With YouTube API For YouTube Shorts

- How To Use YouTube API To Upload Videos

- Guide On How To Use YouTube Live Streaming API

- What Is Youtube Analytics API? How it Delivers Insights

What Is the YouTube Data API Quota Limit and How Is It Used?

In 2026, YouTube Data API v3 continues to use a quota‑unit system, with each Google Cloud project starting at 10,000 units per day.

YouTube API quota is the number of units your application can use per day for API requests. By default, each Google Cloud project gets 10,000 units per day, which resets at midnight Pacific Time. This means you can use the API for free, as long as your application stays within the daily quota limit.
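Because the reset happens at midnight Pacific Time rather than in your server's time zone, it can help to compute how long you have to wait. The following is a minimal sketch, not an official utility: the function name `seconds_until_quota_reset` is hypothetical, and it assumes the `America/Los_Angeles` time zone is the right stand-in for "Pacific Time".

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo  # needs the system tz database (or the tzdata package)

# Assumption: "Pacific Time" is represented by the America/Los_Angeles zone.
PACIFIC = ZoneInfo("America/Los_Angeles")


def seconds_until_quota_reset(now: datetime) -> float:
    """Return the seconds from `now` (timezone-aware) to the next midnight Pacific."""
    local_now = now.astimezone(PACIFIC)
    next_day = local_now.date() + timedelta(days=1)
    next_midnight = datetime.combine(next_day, datetime.min.time(), tzinfo=PACIFIC)
    # Compare in UTC so daylight-saving changes do not skew the result.
    delta = next_midnight.astimezone(timezone.utc) - now.astimezone(timezone.utc)
    return delta.total_seconds()


if __name__ == "__main__":
    wait = seconds_until_quota_reset(datetime.now(timezone.utc))
    print(f"Quota resets in about {wait / 3600:.1f} hours")
```

A scheduler could use this value to pause non-urgent jobs until the quota is available again.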

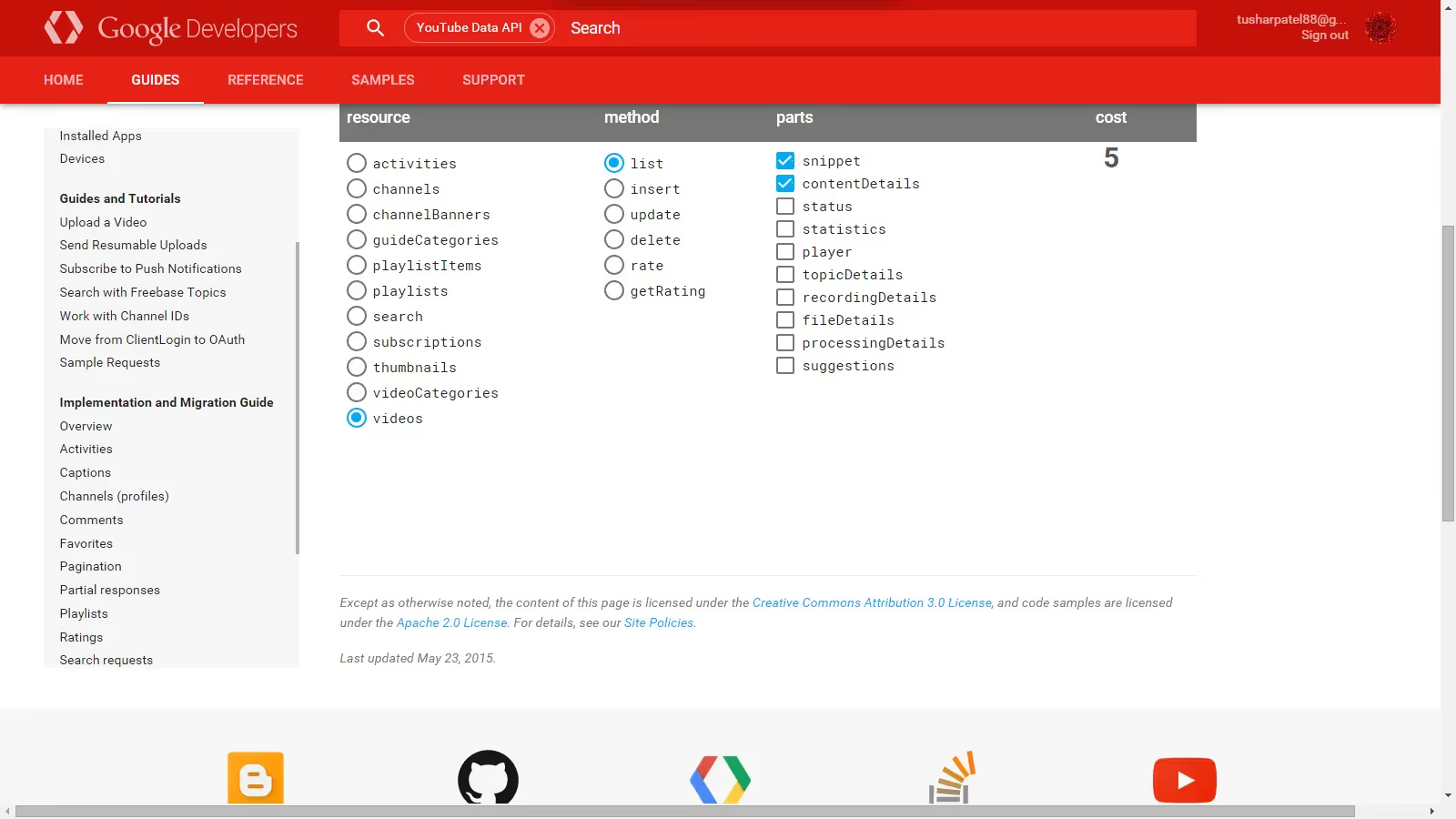

Each API request consumes units depending on the operation. For example, read operations cost 1 unit, search requests cost 100 units, write operations cost 50 units, and video uploads can cost up to 1600 units.

For instance, using the per-operation rates listed in the next section (1 unit per 100 comments fetched, 1 unit per comment deleted, 100 units per 5 videos listed), an application that fetches 10,000 comments (100 units), deletes 4,000 comments (4,000 units), and lists 100 videos (2,000 units) would use 6,100 units in total, leaving 3,900 units for the day.

When using the YouTube API, be mindful of the restrictions on how many requests you can make with your YouTube Data API key. If your usage exceeds the daily limit, you will encounter a “YouTube API quota exceeded” error, which blocks further API requests until the quota resets. To avoid this, track unit consumption and optimize API calls by eliminating unnecessary requests and caching responses. Quota usage is a key consideration when integrating social media APIs into your applications.
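One way to track unit consumption is to charge every request against a local budget before sending it. The sketch below is illustrative only: `QuotaTracker`, `QuotaExceeded`, and the operation names are hypothetical, and the unit costs are the approximate figures quoted in this article, so confirm them against Google's quota calculator before relying on them.

```python
from dataclasses import dataclass

# Approximate unit costs as quoted in this article (assumption: verify before use).
UNIT_COSTS = {"read": 1, "write": 50, "search": 100, "upload": 1600}


class QuotaExceeded(Exception):
    """Raised locally when a request would push usage past the daily budget."""


@dataclass
class QuotaTracker:
    daily_limit: int = 10_000  # default daily quota per Google Cloud project
    used: int = 0

    @property
    def remaining(self) -> int:
        return self.daily_limit - self.used

    def charge(self, operation: str, count: int = 1) -> int:
        """Record `count` requests of one type and return the units left."""
        cost = UNIT_COSTS[operation] * count
        if cost > self.remaining:
            raise QuotaExceeded(
                f"{operation} x{count} needs {cost} units, only {self.remaining} left"
            )
        self.used += cost
        return self.remaining


if __name__ == "__main__":
    tracker = QuotaTracker()
    print(tracker.charge("search", 10))  # ten search requests
    print(tracker.charge("read", 250))   # 250 read requests
```

Calling `charge` before each request lets the application skip or defer a call instead of discovering the limit through a failed response.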

How to Calculate YouTube API Cost Usage?

When you integrate YouTube into your applications through the API, you need to know its limits and how to calculate the cost of your usage. Note that "cost" here does not mean monetary charges; it refers to how much of the API usage limit a request consumes. First, some terminology:

- Quota: The amount of API usage, measured in units, that an application can consume within a specific time frame.

- Units: The YouTube API employs a quota system based on units. Different API requests consume different amounts of quota units.

By default, the YouTube API quota limit is 10,000 daily units for each Google Cloud project. The daily quota resets at midnight Pacific Time. To determine the cost of different actions, Google provides a calculator. It will help you estimate how many units are consumed by different types of requests. Here are some examples of how units are consumed:

- Deleting, reporting or hiding a comment costs 1 unit.

- Checking for the success of comment deletion costs 1 unit.

- Fetching each set of 100 comments consumes 1 unit.

- Listing each set of 5 recent videos uses 100 units.

For instance, if your application deletes 500 comments and fetches 300 comments, it uses 500 units for deletion and 3 units for fetching, for a total of 503 units. If your application handles large volumes of data and you are concerned about exceeding the YouTube API quota, you can reduce consumption by turning off optional steps, such as the check for comment deletion success, which consumes extra units.
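The arithmetic above can be wrapped in a small estimator. This is a sketch under the per-operation rates listed in this section; the function name and its parameters are hypothetical, and it assumes each deletion check costs 1 unit per comment.

```python
import math


def estimate_units(
    deleted_comments: int = 0,
    fetched_comments: int = 0,
    listed_videos: int = 0,
    verify_deletions: bool = False,
) -> int:
    """Estimate quota units using the rates quoted in this article."""
    delete_units = deleted_comments                   # 1 unit per comment deleted
    fetch_units = math.ceil(fetched_comments / 100)   # 1 unit per set of 100 comments
    video_units = math.ceil(listed_videos / 5) * 100  # 100 units per set of 5 videos
    # Assumption: one 1-unit check per deleted comment when verification is on.
    verify_units = deleted_comments if verify_deletions else 0
    return delete_units + fetch_units + video_units + verify_units


if __name__ == "__main__":
    print(estimate_units(deleted_comments=500, fetched_comments=300))
    print(estimate_units(deleted_comments=500, fetched_comments=300,
                         verify_deletions=True))
```

The first call reproduces the 503-unit example; the second shows how enabling the deletion check raises the total.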

Now, what if your application needs more than the default 10,000 units daily? There is a process to request an API quota increase through an elaborate form, which might be granted especially for large channels. Having a YouTube Partner Manager may also facilitate this process.

There's also a workaround to the quota limit: creating multiple Google Cloud projects. Each project has its own YouTube quota limit. Essentially, you can switch between different client_secrets.json files (the OAuth client credentials downloaded for each project, not API keys) when one project's quota is depleted. This is done by naming the files client_secrets1.json, client_secrets2.json, and so forth.

Let's look at a test case scenario:

Imagine you have a social media API integration that scans 10,000 comments (100 units), deletes 4,000 comments (4,000 units), and lists 100 recent videos (2,000 units). This would consume a total of 6,100 units. With the default quota of 10,000 units, you would still have 3,900 units left for the day. Knowing how the YouTube API limit operates and how to calculate quota consumption is crucial for managing a social media search API integration, and it lets you make the most of the available resources without running into quota limitations.

How to Fix Exceeded YouTube API Quota Limit

When you start a project that involves YouTube API integration, it is vital to understand the quota limits. Once you encounter the “YouTube API quota exceeded” message, quick action is required to prevent service interruption. This section explains how to resolve the issue by creating a YouTube API key and managing the quota.
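Before acting on the error, it helps to confirm that a failed response really is a quota problem and not, say, a permissions issue. The sketch below assumes the error arrives as an HTTP 403 whose JSON body lists a `quotaExceeded` reason, which is the shape the API has commonly returned; the function name and the sample body are illustrative.

```python
import json


def is_quota_exceeded(status_code: int, body: str) -> bool:
    """Return True if an error response looks like a quota-exceeded error."""
    if status_code != 403:
        return False
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return False
    if not isinstance(payload, dict):
        return False
    error = payload.get("error")
    if not isinstance(error, dict):
        return False
    # Assumption: each entry under error.errors carries a "reason" string.
    return any(
        isinstance(item, dict) and item.get("reason") == "quotaExceeded"
        for item in error.get("errors", [])
    )


# Illustrative body only; real messages may differ.
SAMPLE_BODY = json.dumps({
    "error": {
        "code": 403,
        "message": "Quota exceeded.",
        "errors": [{"domain": "youtube.quota", "reason": "quotaExceeded"}],
    }
})

if __name__ == "__main__":
    print(is_quota_exceeded(403, SAMPLE_BODY))
```

With a check like this, an application can stop retrying and fall back to cached data until the quota resets.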

Imagine a scenario where a social media search API is integrated into a website, pulling in YouTube videos. Suddenly, the videos cease to populate, and the dreaded YouTube API quota exceeded message appears. To address this, the first course of action is to create a new YouTube API key. The process begins by visiting the Google APIs library.

For illustrative purposes, picture a library with endless shelves of books. One shelf is labeled ‘YouTube Data API v3’ and needs to be selected. If there's no existing project associated with the account, clicking ‘Enable’ will prompt the creation of a new project. Name the project descriptively, such as ‘YouTubeVideoIntegration’. It's like naming a book based on its content, making it easier to locate later.

Once the project is active, credentials for the YouTube API key are required. Envisage credentials as a library card, granting access to specific resources. On the credentials page, opt for ‘Web server’ based calls and ‘Public data’. Select ‘What credentials do I need?’ and the API key will be revealed.

For security purposes, restrict the key’s usage. Imagine giving a library card to a friend; it is wise to limit the kind of books they can check out on one’s behalf. Restrict the key by enabling HTTP referrers, inputting the domain, and ensuring that the key is only utilized for YouTube Data v3 API calls.

Upon completing these steps, insert the new key into the plugin’s API text and save it. This can be likened to bookmarking a page for easy access in the future.

After the new API key is set, it might still be necessary to request a higher daily limit. This is akin to a regular library patron who reads voraciously, and thus requests an extension of the borrowing limit.

To proceed, log into the Google account associated with the YouTube API key, and extract the Project ID and Number. Think of this as acquiring a special library card number for privileged access. Armed with this information and a valid justification for the higher quota, fill out this application form.

Efficiently Utilizing the YouTube API Quota Limit

Using the API quota efficiently is essential to keeping an integration running without hitting the ceiling. This section covers how to get the most out of the quota limit for a seamless experience with the YouTube Data API v3.

The Need for Efficiency

One might think that integrating the latest YouTube video into a website should be simple, but that is not the case. When using YouTube's embedded player, which relies on an older AJAX API, it fetches an extensive amount of data. Imagine this as trying to obtain a book from a library, but instead, getting an entire shelf delivered.

However, YouTube Data API v3 provides a more streamlined approach. Picture the modern library systems where one can efficiently search for and borrow only the required book. It supports querying specific data and limiting the number of results, thus saving on the quota.

Prudent API Calls

To fetch the latest video, for instance, a call to the /search endpoint is necessary, which costs a minimum of 100 API units. The endpoint can be seen as a special library counter where one requests information. With only 10,000 units available per day, efficient calls are crucial. Imagine having a limited number of library visits per day; one would want to make each count.
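As an illustration of making each call count, the sketch below builds a single search request that asks for only the newest video and only the fields needed. It does not send the request; the function name, `UC_EXAMPLE`, and `YOUR_API_KEY` are placeholders, and the exact `fields` filter is an assumption you may want to adjust.

```python
from urllib.parse import urlencode

SEARCH_ENDPOINT = "https://www.googleapis.com/youtube/v3/search"


def latest_video_search_url(channel_id: str, api_key: str) -> str:
    """Build a search URL that requests just the most recent video of a channel."""
    params = {
        "part": "snippet",
        "channelId": channel_id,
        "order": "date",   # newest first
        "type": "video",   # skip playlists and channels
        "maxResults": 1,   # only the latest item
        # Partial response: return only the video ID and title.
        "fields": "items(id/videoId,snippet/title)",
        "key": api_key,
    }
    return f"{SEARCH_ENDPOINT}?{urlencode(params)}"


if __name__ == "__main__":
    # Placeholder values, not real credentials.
    print(latest_video_search_url("UC_EXAMPLE", "YOUR_API_KEY"))
```

Trimming the response does not lower the unit cost of the search itself, but it keeps payloads small and makes the result cheap to cache.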

The “YouTube API quota exceeded” message can also become a common sight for developers whose sites are visited frequently. It is therefore essential to evaluate your needs and decide how often, and whether, each piece of data really has to be fetched.

Building an API Proxy Cache

One approach to maximizing efficiency is to build an API proxy cache. This can be visualized as creating a mini-library at home, storing copies of frequently accessed books, thus reducing the need to visit the main library.

YouTube API Limit: What It Entails

The API proxy cache could comprise two PHP files: one storing credentials and the other containing functional code. This solution must operate seamlessly with the website's database, querying the YouTube API and storing the results in a database.

MySQL 5.7 introduced a JSON datatype, which can be utilized here for efficient storage and retrieval. The data type appears as text, but the server understands queries to data stored inside the JSON blob. Through the use of VIRTUAL columns and indexes, one can efficiently query the JSON data, just like having a well-organized mini-library at home.

Refreshing the Cache

By default, each element in the API cache could refresh every 3600 seconds. However, customizing this to specific query needs is feasible. This is akin to periodically updating the collection in the mini-library.
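The caching idea can be sketched in a few lines. Note that this example swaps the PHP and MySQL setup described above for Python with an in-memory SQLite table, purely to stay self-contained; the `ApiCache` class, its column names, and the `fetch` callback are all hypothetical.

```python
import json
import sqlite3
import time
from typing import Any, Callable


class ApiCache:
    """Store API responses as JSON text and refetch them after a TTL expires."""

    def __init__(self, ttl_seconds: int = 3600,
                 clock: Callable[[], float] = time.time) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the expiry logic can be tested
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE cache ("
            "cache_key TEXT PRIMARY KEY, body TEXT NOT NULL, fetched_at REAL NOT NULL)"
        )

    def get(self, cache_key: str, fetch: Callable[[], Any]) -> Any:
        """Return the cached value, calling `fetch` only if it is missing or stale."""
        now = self.clock()
        row = self.db.execute(
            "SELECT body, fetched_at FROM cache WHERE cache_key = ?", (cache_key,)
        ).fetchone()
        if row is not None and now - row[1] < self.ttl:
            return json.loads(row[0])
        data = fetch()  # this is where the real API call would spend quota
        self.db.execute(
            "INSERT OR REPLACE INTO cache (cache_key, body, fetched_at) VALUES (?, ?, ?)",
            (cache_key, json.dumps(data), now),
        )
        self.db.commit()
        return data


if __name__ == "__main__":
    cache = ApiCache(ttl_seconds=3600)
    result = cache.get("latest-video", lambda: {"videoId": "example"})
    print(result)
```

With a one-hour TTL, a page that would otherwise trigger a search on every view makes at most 24 such calls per day for that cache key.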

Best Practices to Optimize YouTube API Usage

- Fetch only required data instead of full responses

- Reduce frequency of API calls (avoid real-time calls when not needed)

- Cache frequently used responses to minimize repeated requests

- Use efficient endpoints and limit result sizes

- Refresh cached data at controlled intervals (e.g., every 1 hour)

Conclusion: Optimizing YouTube API Integration

Efficient YouTube API integration requires a clear understanding of quota limits and how API requests consume units. By tracking usage, reducing unnecessary calls, and implementing caching strategies, developers can avoid “YouTube API quota exceeded” errors and ensure reliable application performance.

Using techniques like optimized API calls and proxy caching not only reduces quota consumption but also improves response time and overall system efficiency. This ensures that critical data—such as videos, channel details, and metadata—is fetched consistently without interruptions.

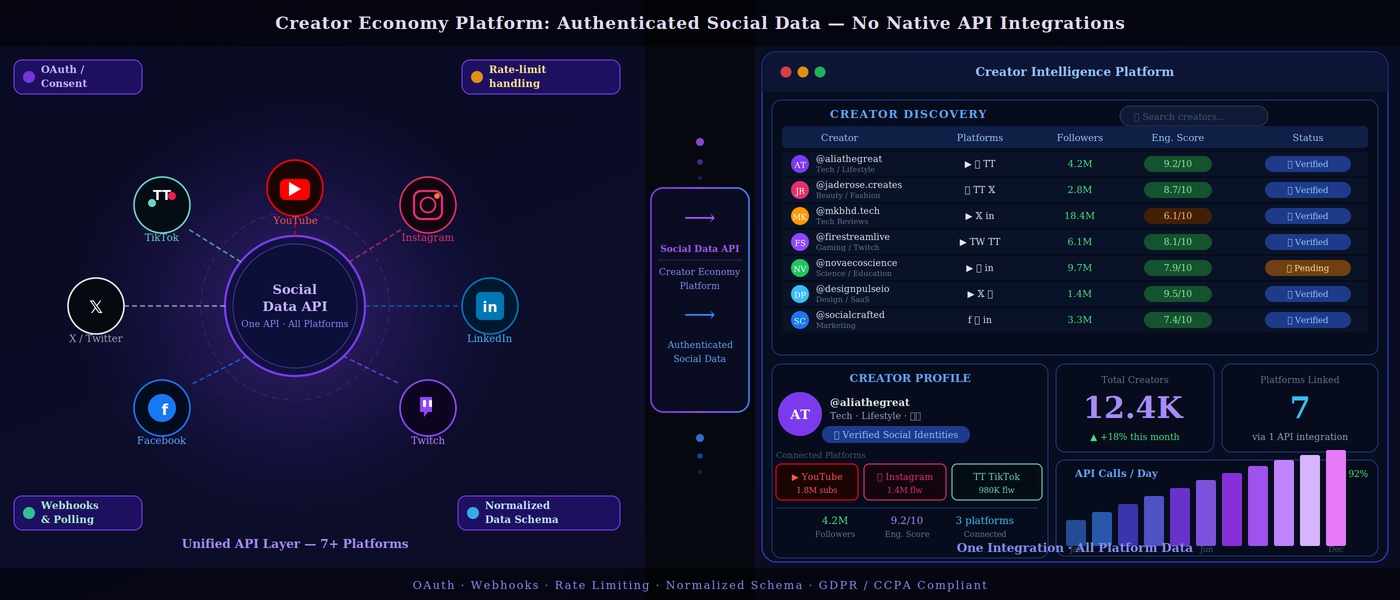

For teams looking for a more scalable solution, platforms like Phyllo simplify YouTube API integration by providing access to creator data, content metrics, and audience insights through a unified API layer. This reduces the complexity of managing quotas and enables faster, more reliable data access.

Also read: How Beacons uses Phyllo to create the perfect media kit for creators to be successful

Why Choose Phyllo for YouTube API Integration?

Managing YouTube API quotas, maintaining data consistency, and scaling integrations can quickly become complex for developers. Phyllo simplifies this by providing a unified API to access creator data, content metrics, and audience insights—without the need to manage quota limits, caching layers, or multiple integrations manually.

Phyllo delivers reliable, API-direct data with real-time synchronization, ensuring your application always works with accurate and up-to-date information. With built-in webhooks, developers can receive instant updates whenever creator data changes, reducing the need for repeated API calls and helping optimize quota usage. It also provides complete audience insights similar to creator dashboards, along with frequent data refresh cycles (often within 24 hours).

Phyllo is built to support scalable use cases across influencer marketing platforms, creator tools, automated verification systems, as well as fintech and Web3 applications. Instead of spending time managing API limits and infrastructure, teams can focus on building and scaling their core product.

If you're building applications that rely on YouTube data, Phyllo helps you avoid quota limitations, simplify integration, and scale faster. Explore Phyllo to streamline your YouTube API integration today.

FAQs:

1. What is the YouTube API quota?

YouTube API quota is the daily usage limit assigned to each Google Cloud project, measured in units. By default, each project gets 10,000 units per day, and every API request consumes a specific number of units based on the operation performed.

2. How to calculate YouTube API cost?

YouTube API cost is calculated by adding the units consumed by each request. For example, fetching 100 comments costs 1 unit, while listing videos costs around 100 units and uploading a video can cost up to 1600 units. Your total usage must stay within the daily quota limit.

3. What is the daily quota limit for the YouTube Data API?

The default daily quota for the YouTube Data API is 10,000 units per project, and it resets at midnight Pacific Time. This limit can be increased by submitting a quota extension request through Google Cloud Console.

4. What happens if you exceed the YouTube API quota?

If you exceed the quota, your application will stop making API requests until the quota resets or additional quota is approved. This can cause failures in fetching or updating data, impacting application functionality.

5. How to fix the YouTube API quota exceeded error?

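You can fix this error by waiting for the daily reset, reducing API usage, or enabling caching. Creating a new API key helps only if the key belongs to a different Google Cloud project, because quota is tracked per project rather than per key. For long-term solutions, you can request a quota increase through Google Cloud Console.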

6. How can you reduce or optimize YouTube API usage?

To optimize API usage, minimize unnecessary API calls, cache frequently requested data, batch requests where possible, and avoid resource-intensive operations. These strategies help reduce unit consumption and prevent quota exhaustion.

7. Can you increase the YouTube API quota?

Yes, you can request a quota increase by submitting a request in the Google Cloud Console. You’ll need to provide project details, expected usage, and a valid business justification for approval.

8. How have YouTube API limits changed in 2026?

As of 2026, the YouTube Data API v3 still uses the same quota‑unit system, with 10,000 units per day by default and unit costs between 1 and 1,600 per request. The main change is stricter enforcement and more frequent quota checks for high‑volume or abusive projects, so developers should monitor usage and request increases early.
